Have you ever caught yourself apologizing to a stubborn printer or pleading with a glitchy laptop, as if these inanimate objects could hear, or worse, care? Welcome to the curious quirk of anthropomorphism: the human tendency to imbue non-human entities with human-like qualities, intentions, and emotions. From ancient myths in which rivers wept and winds whispered to modern AI assistants that “feel” like friends, our brains seem wired to see humanity everywhere. But why does this happen? What cognitive machinery drives us to project our inner world onto the outer one? And what happens when this instinct backfires, blurring the line between reality and illusion?

The Brain’s Overactive Imagination: Pattern-Seeking Machines at Work

At the heart of anthropomorphism lies a fundamental feature of human cognition: our brain’s relentless pursuit of patterns. Over evolutionary time, survival depended on recognizing faces in the shadows or detecting agency in rustling bushes, even when the rustling was just the wind. One visible product of this hyperactive pattern recognition is pareidolia, the tendency to perceive meaningful forms, especially faces, in random or ambiguous stimuli. A cloud resembling a dragon, a rock shaped like a face, or the famous “Man in the Moon” are all manifestations of this quirk.

Neuroscientifically, this phenomenon has been linked to the fusiform face area (FFA), a brain region that responds preferentially to faces. Imaging studies suggest that when people see faces in ambiguous stimuli, the FFA responds in a way that resembles its response to real faces. This is more than a perceptual quirk; it may reflect a survival strategy. Mistaking a shadow for a predator costs little, while overlooking a real predator can be fatal, so a system biased toward false alarms pays off. In that sense, anthropomorphism is less a product of imagination than of the brain’s default setting to err on the side of caution, even when the “threat” is a toaster that won’t pop.

The Social Brain Hypothesis: Why We Can’t Help But Humanize

Anthropomorphism isn’t just a visual trick; it’s a social one. Humans are inherently social creatures, and our brains are optimized for navigating complex social landscapes. The social brain hypothesis suggests that our cognitive abilities evolved to manage relationships, alliances, and hierarchies. To do this effectively, we rely on theory of mind—the ability to attribute mental states to others.

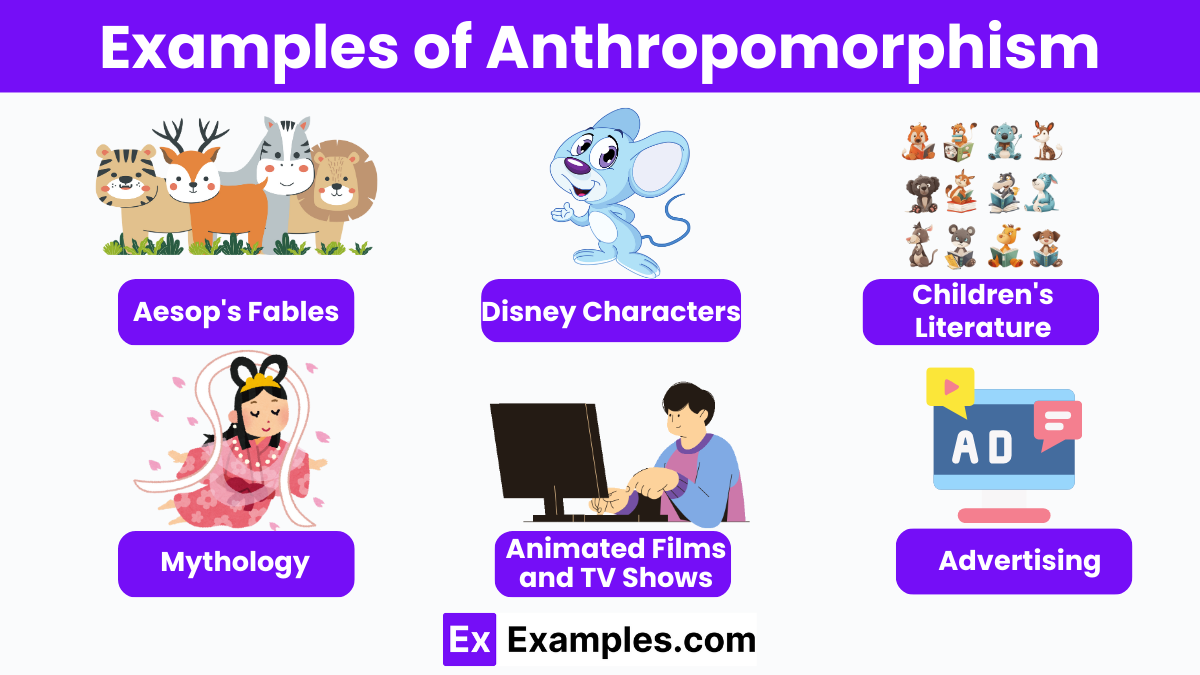

Here’s the twist: our theory of mind doesn’t discriminate. It extends beyond humans to pets, robots, and even fictional characters. When we anthropomorphize, we are essentially treating non-human entities as social actors, which lets us predict their behavior with the same mental models we use for people. A chatbot that “understands” us stops being just a program and becomes a conversational partner. A Roomba that keeps wedging itself under the couch becomes a stubborn pet. This social framing makes the world feel more predictable and less alien, even when the entities in question have no inner life.

The Empathy Paradox: When Anthropomorphism Backfires

While anthropomorphism can foster connection, it also carries risks. The same empathy that makes us care for a virtual assistant can lead to misplaced emotional investment. Consider the phenomenon of digital grief, in which people mourn the loss of a beloved AI companion as if it were a living being. Anthropomorphizing AI also raises ethical dilemmas: should we hold machines accountable for “mistakes,” or does doing so dangerously distort where responsibility actually lies?

Anthropomorphism can also distort our judgment. Some studies suggest that people trust machines more when they have human-like features such as a face, a name, or a voice, and that this trust does not always track how well the machine actually performs. Yet the relationship is not simply “the more human, the better.” The uncanny valley effect, in which a near-but-imperfect human likeness triggers discomfort rather than warmth, shows how finely tuned our social instincts are. Too much humanity in the wrong place can feel eerie, unsettling, or even threatening. The challenge, then, is to harness anthropomorphism’s benefits while guarding against its pitfalls.

The Role of Emotion: Why We Feel for Inanimate Objects

Anthropomorphism isn’t just a cognitive exercise; it’s an emotional one. Our brains are wired to respond to perceived suffering, joy, or intent, regardless of the entity’s actual state. This is why we feel frustration when a GPS reroutes us unnecessarily or relief when a video game character “survives” a perilous jump. Our emotional reactions respond to cues of intention and outcome, and knowing that no mind is actually present does little to switch them off.

This emotional pull is why brands spend heavily on giving their products a “personality.” A car isn’t just a machine; it’s a “trusted companion” on life’s journey. A smartphone isn’t a tool; it’s a “friend” that “knows” us. By anthropomorphizing, companies tap into our innate desire for connection, making products feel more relatable and desirable. Yet this emotional manipulation can also breed consumer disillusionment when the product fails to live up to its implied “promise” of companionship.

Cultural and Developmental Influences: How Society Shapes Anthropomorphism

Although the tendency to anthropomorphize appears across cultures, how it is expressed is shaped by culture and upbringing. In animistic cultures, where nature is imbued with spirits and intentions, anthropomorphism is a natural way of understanding the world. In societies that prize scientific or strictly rational explanation, by contrast, it may be dismissed as childish or superstitious. Even within a culture, individual differences play a role. Children, with their vivid imaginations, anthropomorphize far more readily than adults, while adults with a stronger systemizing tendency (a cognitive style focused on rules and patterns) may anthropomorphize less.

Developmentally, anthropomorphism may serve as a bridge between the concrete and the abstract. A child who believes their stuffed animal is “alive” is arguably practicing the skills needed to navigate a complex social world. As we grow, we learn to temper this instinct, but it never fully disappears. Even in adulthood, we retain a latent tendency to see the world through a social lens, one that colors our perceptions, decisions, and interactions.

The Future of Anthropomorphism: AI, Ethics, and the Blurring of Lines

As technology advances, anthropomorphism is taking on new dimensions. AI systems are becoming increasingly human-like, from voice assistants that mimic empathy to robots designed to resemble humans. This raises critical ethical questions: Should AI be designed to exploit our anthropomorphic instincts? Can we trust our emotional responses to machines that are, at their core, sophisticated algorithms?

The challenge lies in balancing innovation with responsibility. While anthropomorphism can make technology more accessible and user-friendly, it can also obscure the true nature of these systems. A chatbot that “feels” like a friend isn’t actually experiencing emotions; it’s simulating them. The danger is that we forget this distinction and come to over-rely on technology or trust it where trust isn’t warranted. As AI permeates more and more of daily life, understanding the cognitive roots of anthropomorphism will be crucial to using it wisely.

Conclusion: A Double-Edged Sword Worth Wielding

Anthropomorphism is a testament to the human brain’s creativity and adaptability. It’s a cognitive shortcut that turns the unfamiliar into the familiar, the inanimate into the animate, and the mechanical into the social. Yet, like any tool, it has its limits and dangers. The key is to recognize when we’re anthropomorphizing—not to suppress the instinct, but to use it thoughtfully.

So the next time you feel a pang of guilt for yelling at your computer, remember: your brain is just doing what it’s wired to do. It’s seeing a pattern, predicting agency, and trying to make sense of a world that’s often too complex to navigate alone. The challenge isn’t to stop anthropomorphizing; it’s to do it with awareness, ensuring that our tendency to see humanity everywhere doesn’t blind us to the very real differences that define our world.